UrbanKaoberg.com - Part II: Revenge of the "Mullet"

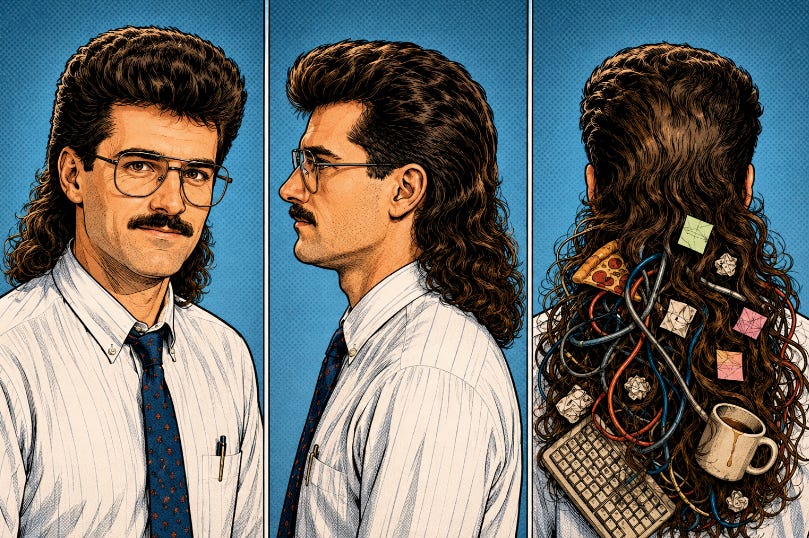

Remember the Mullet of the ’80s — “business in front, party in back”? I think LLM coding produces “Mullet Code”: professional in front, KAOS in back. This piece is about taming the KAOS.

When I wrote about the origin story of UrbanKaoberg in "There And Back Again" two months ago, I talked about the “euphoria of infinite productivity.” I had gone from not writing code professionally for 35 years to building a working Macro/Micro financial research terminal in less than a month without needing to personally write a single line across almost 100,000 lines of code. It felt like discovering a superpower, and in many ways, it was. What I did not fully appreciate at the time was that superpowers can come with a large blast radius.

Since that first piece, the project has kept compounding. UrbanKaoberg is now north of 1,900 commits. The codebase is around 160,000 lines across more than 850 files, with nearly 90 API files. But the raw numbers are not the interesting part anymore.

The interesting part is that the nature of the work changed. The first three weeks were mostly feature creation: charts, dashboards, watchlists, DCFs, data provider abstraction, news, AI analysis, portfolio tools, admin panels, and every other shiny object my three decades of market experience wanted to see in a financial terminal.

These last 6 1/2 weeks have been about something much less glamorous: the plumbing that determines whether a product can be trusted. That means where numbers come from, how stale they are, what happens when a data source fails, which parts of the app are allowed to make decisions, how users are protected, and how to keep one enthusiastic AI-generated fix from accidentally breaking something three rooms away. In other words, the work shifted from making the thing look “professional in front” to cleaning up after the “party in back” that non-deterministic LLM coding left in its wake.

Mullet Code

In short, vibe coding produces what I call “Mullet Code.”

If you grew up in the ’80s, you remember the mullet. Some of you may even have had one. I will neither confirm nor deny anything about certain old photographs and will just say that I was (and still am) a headbanger (albeit with much less hair today). The haircut had a certain deranged internal logic: from the front, it could almost pass for respectable; from the back, PARTAY BABY!

Just as AI-generated text and images can produce “AI slop,” LLM-generated code can produce its own special genus of slop: code that looks respectable from the front but turns into a complete mess in back.

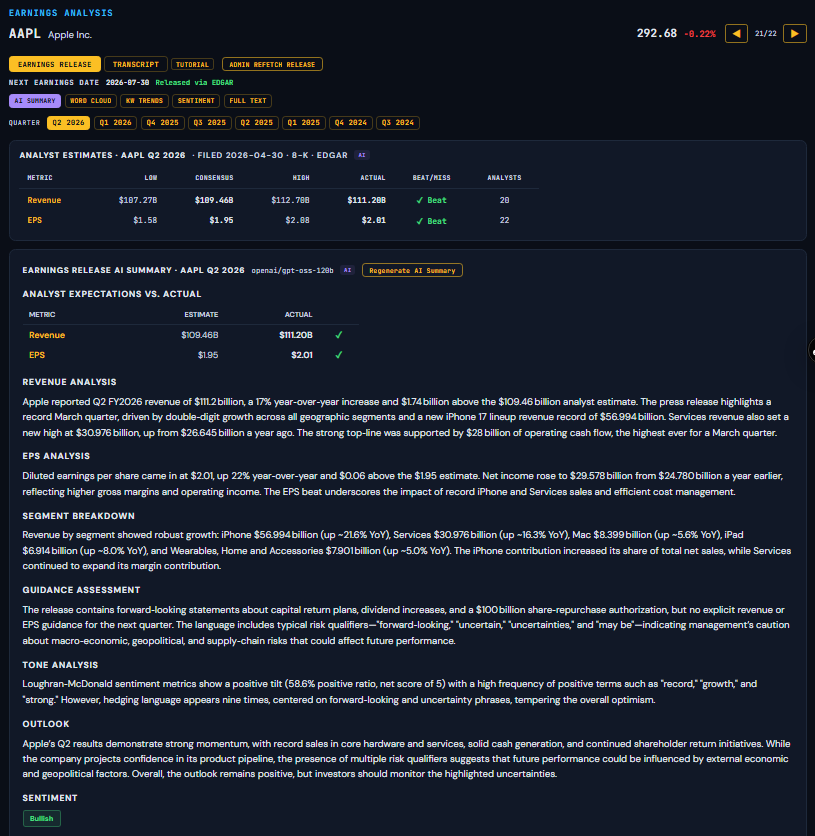

That is exactly what early AI-generated software can become if you are not careful. From the user’s point of view, UrbanKaoberg looked pretty professional after the first three weeks, replete with dense financial data, interactive charts, multi-chart mode, macro release dashboards, earnings analysis, capital structure, DCF models, portfolio analytics, admin tooling — the whole thing looked like a serious financial terminal. But behind the GUI, I started recognizing “under-the-hood” problems multiplying like the "Tribbles" of Star Trek fame.

One screen would get a company’s market value from one place. Another would calculate it from price and shares. Another would trust a data provider’s pre-packaged field. Another would show a blank if its favorite source came back empty. All of those decisions could be locally defensible, and that is what made the problem so insidious. Each local fix made sense in isolation. Each panel worked. Each fallback had a reason. But all of them could still be labeled “Market Cap,” which meant the app no longer had one internal definition of a supposedly basic financial metric.
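One way to picture the fix is a single resolver that every panel calls, instead of each panel choosing its own source. The sketch below is a hypothetical Python illustration, not UrbanKaoberg's actual code. The precedence it uses (price × shares first, a vendor field only as a labeled fallback) and the names `resolve_market_cap` and `MarketCap` are assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MarketCap:
    value: Optional[float]  # None when no defensible number exists
    method: str             # how the number was produced
    source: str             # where the inputs came from


def resolve_market_cap(
    price: Optional[float],
    shares_outstanding: Optional[float],
    vendor_market_cap: Optional[float],
    price_source: str = "quote",
    vendor_source: str = "vendor",
) -> MarketCap:
    """One app-wide definition of Market Cap (hypothetical precedence).

    Every panel calls this instead of picking its own source, so the
    label "Market Cap" always means the same thing everywhere.
    """
    if price is not None and shares_outstanding is not None:
        return MarketCap(price * shares_outstanding, "price_x_shares", price_source)
    if vendor_market_cap is not None:
        # Labeled fallback: the UI can disclose that this came from a vendor field.
        return MarketCap(vendor_market_cap, "vendor_field", vendor_source)
    return MarketCap(None, "unavailable", "none")
```

Because the method travels with the value, a panel that falls back to the vendor field can say so, rather than silently disagreeing with its neighbor.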

That is not a syntax issue. It is not even a normal code-quality issue. It is an epistemology issue — yes, an obnoxiously pretentious word, but the right one here. What does the app know? How does it know it? And who gets to decide what is true?

Those sound like questions from a philosophy seminar, but in a financial terminal they become very practical very quickly. Is this earnings number GAAP or adjusted? Is this margin based on the last twelve months or the last fiscal year? Is this debt number coming from a filing, a vendor, a company supplement, or a local calculation? Is this beta from one provider, another provider, or something computed inside the app? Is this metric meaningful for Apple but nonsensical for a CLO equity fund? If the app cannot answer those questions, then the polish of the GUI is beside the point. A beautifully rendered wrong number is worse than an ugly blank cell.

The more interesting competitive-analysis question, to me, is this:

How many of the current crop of vibe-coded financial terminals actually solve these problems given the complexity of the underlying solutions, especially in a non-deterministic vibe-coded world?

My bet and UrbanKaoberg’s bet: very few.

In my experience, even Bloomberg — widely considered the gold standard of market terminals — can struggle with these issues, especially when years of code sprawl and feature bloat leave different parts of the app calculating the same metric differently.

My goal with UrbanKaoberg is to prevent this from happening.

The Brave New World of LLM Coding

In “There And Back Again,” I wrote about the Euphoria of Infinite Productivity, and I stand by that. AI coding is astonishing. The compression of time is real. A motivated domain expert can now build things that would have required a full engineering team not long ago. That is not hype; I have been living it every day.

But there is a shadow side to this productivity that I did not emphasize enough the first time around:

When the cost of adding features collapses, the cost of adding architectural inconsistency collapses too.

In the old world, if you had to spend three weeks hand-coding a feature, you had a lot of time to think about whether it belonged. You might draw a flowchart first, ask where the data should live, bounce ideas off fellow engineers, or decide the feature was not worth it. In the AI world, you can have the first version running before lunch, which sounds great until the fifth “before lunch” feature quietly contradicts the second one.

This is the part of vibe coding that does not get enough discussion. The failure mode is often not spectacular. It is not always the app crashing, and it is not always hallucinated code that obviously fails. The more dangerous failure mode is credibility.

Each feature works, each fix makes sense, each fallback is reasonable, each cache helps, and each provider integration solves an immediate problem. Then, one day, you discover that the same financial metric means different things depending on which tab the user clicked. That was when the architecture migration became unavoidable, not because I wanted pretty diagrams, but because I no longer trusted just handing the keys to an LLM coder and letting it run rampant without guardrails.

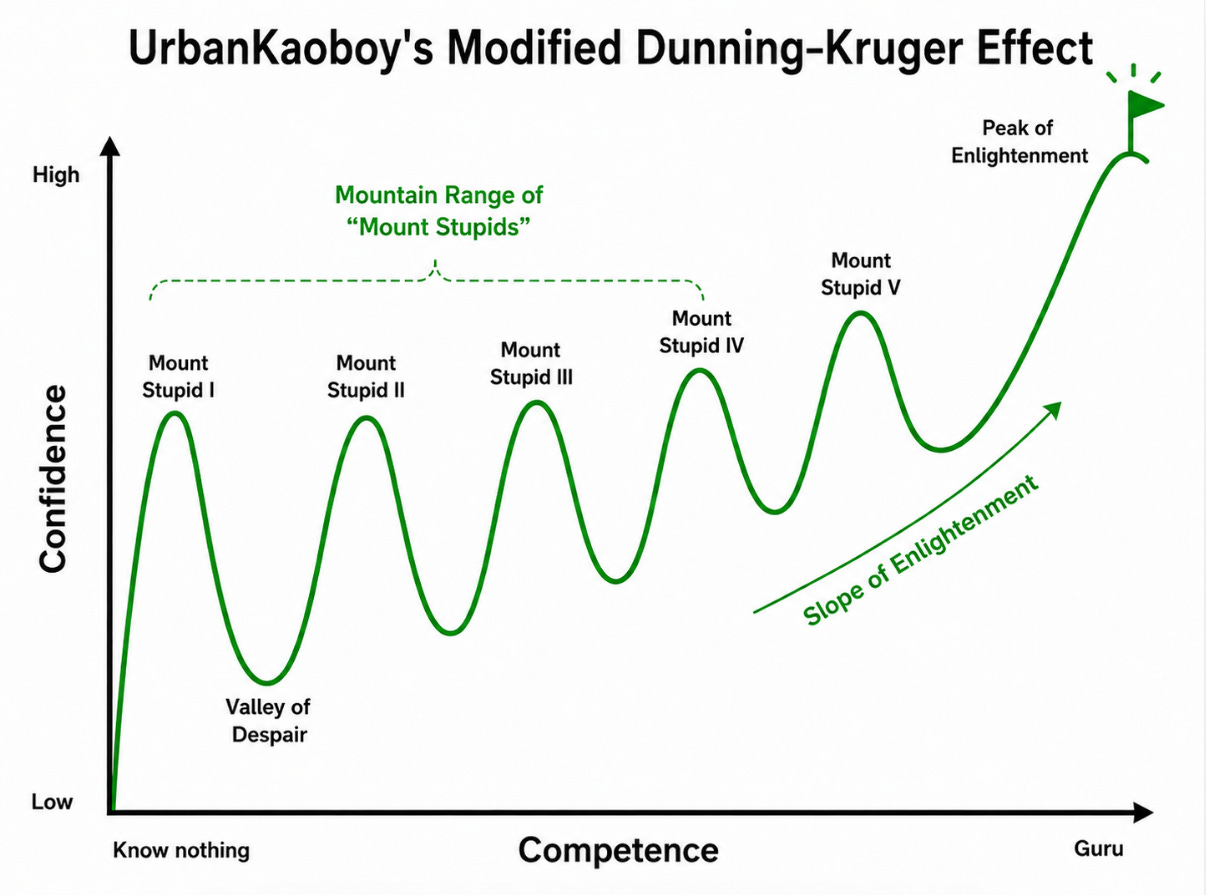

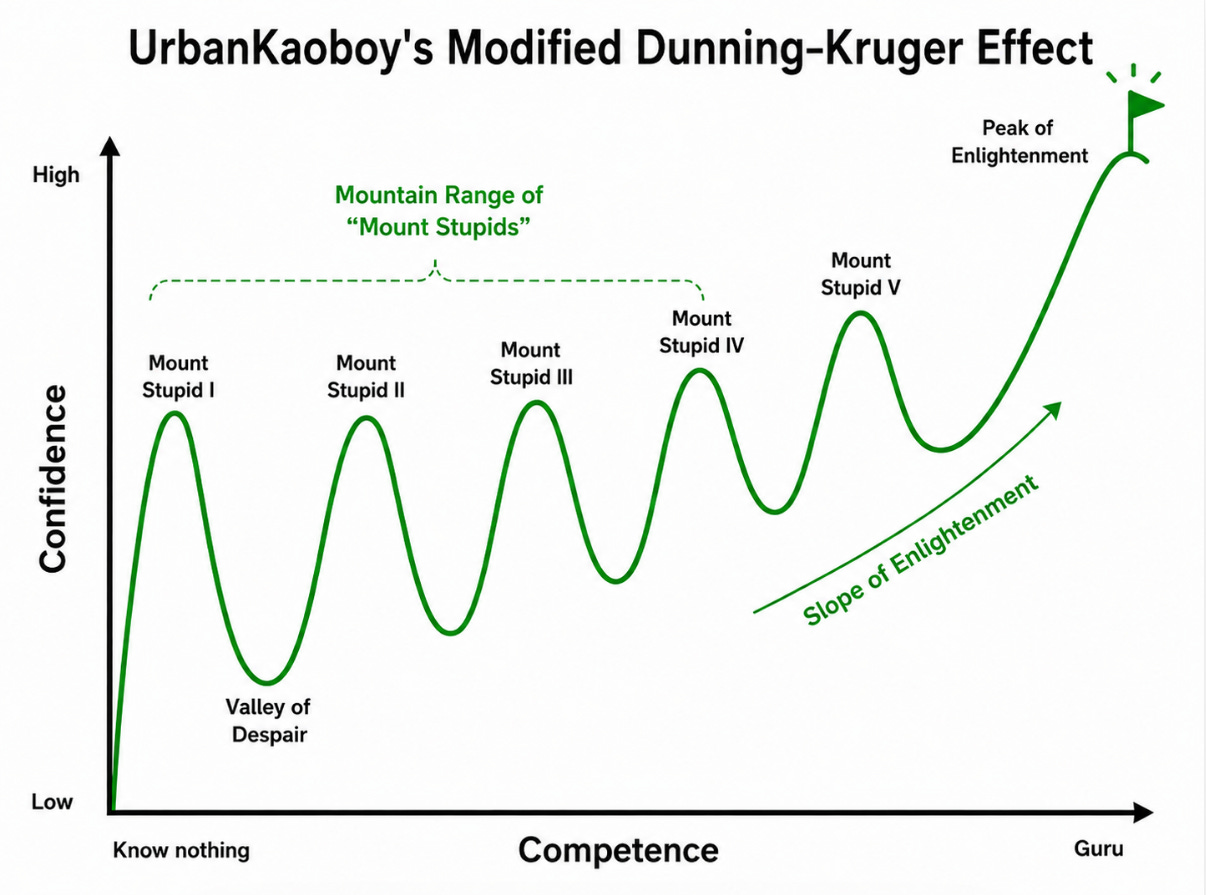

An Entire Mountain Range of Mount Stupids

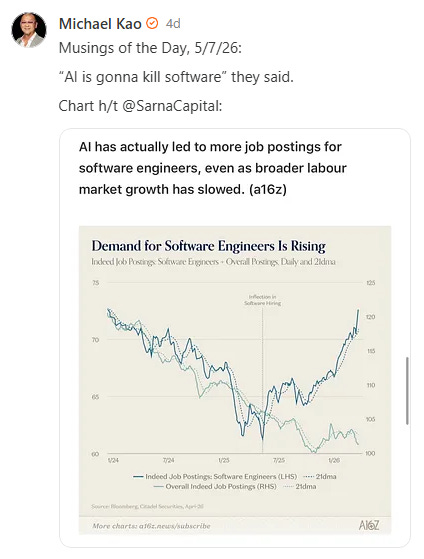

In my first essay, I used the “Mount Stupid” chart to describe the journey from euphoric AI productivity into the Valley of Despair. It turns out Mount Stupid is not a single peak. It is an entire cursed mountain range, because the non-deterministic aspect of LLM coding can create a gigantic blast radius of bugs.

The first Mount Stupid was, “I can build a financial terminal in three weeks!” The second Mount Stupid was, “The terminal works, therefore the architecture must be fine.” That second one was more dangerous, because a demo can work with weak architecture. A personal tool can work with weak architecture. Even a beta product can work with weak architecture for a while, especially if the founder is around to babysit it. But a commercial financial product cannot rely on babysitting. It needs boring reliability, a phrase that has become increasingly important to me. Not exciting reliability, not clever reliability, not “look what the AI did” reliability. Just boringly correct and boringly debuggable software.

One of the hardest habits to break with AI coding is the instinct to say, “Fix this,” because the AI usually can. Blank panel? Fix it. Broken chart? Fix it. Provider timeout? Fix it. Wrong label? Fix it. Stale data? Fix it. But at some point, “fix it” becomes the wrong instruction.

The better question is, “What class of failure allowed this bug to exist?” That is the shift from coding to engineering. A blank market-value field in one part of the app was not just a blank field. It was evidence that two parts of the product had developed two different habits for answering the same question. One surface showed nothing. Another showed a number. The user quite reasonably asks, “Which one is right?” That question is deadly for a financial terminal. The answer cannot be, “Whichever panel you happened to open.”

Asking the wrong question is not even the worst part of LLM coding. The much more insidious problem is that despite my best efforts at designing abstraction into my project, I found it difficult to make the LLM respect the abstractions I had created. Worse, I found that its debugging fixes were haphazard, and more often than not, one fix here would break three things elsewhere in the codebase.

As my codebase grew with all the new features I added, I was learning very quickly that behind the nice front end there was Mullet Code, and that I needed to figure out how to rein in the KAOS ASAP.

Containing the Blast Radius of Mullet Code

Because it’s been decades since I obtained my degree in Electrical Engineering & Computer Science, I’ve been wondering how they teach the subject now given this Brave New World of non-deterministic LLM coding.

I consulted with my niece, a recent computer science grad working for AWS. I also consulted with a good friend who is a professional coder. I was surprised that they both recommended an “oldish” textbook entitled Principles of Computer System Design written by Saltzer and Kaashoek in 2009, which I began to study assiduously.

One immediate learning stood out:

If the number of lines of code (LOC) is N, then BugCount is proportional to N. Makes sense.

DebugTime, however, is proportional to N × BugCount, and therefore to N^2. Not good.

I was experiencing this in real time. Not only was this book from almost 20 years ago spot-on, but the constant bug regressions caused by the non-deterministic nature of LLM coding made it feel like “one step forward, three steps back.” I was in debugging hell.

The canonical computer science solution to this problem is to modularize a growing codebase into K modules of roughly N/K lines each. If each bug can be localized to a single module, debugging one module takes time proportional to (N/K)^2, and debugging all K modules takes K × (N/K)^2, which reduces DebugTime by a factor of K:

DebugTime ∝ (N^2)/K
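A quick back-of-the-envelope check of the formula, using the roughly 160,000-line figure from earlier and an arbitrary K = 10 purely for illustration. Like the book's simplified model, this sketch assumes every bug can be localized to one module and ignores the constant factor, so only ratios are meaningful.

```python
def relative_debug_time(n_lines: float, k_modules: int = 1) -> float:
    """Debug-time model from the text, up to a constant factor.

    BugCount grows with module size, and each bug costs time proportional
    to module size, so one module of N/K lines costs (N/K)^2.
    Summed over K independent modules: K * (N/K)^2 = N^2 / K.
    """
    module_size = n_lines / k_modules
    return k_modules * module_size ** 2


N = 160_000
monolith = relative_debug_time(N, 1)
modular = relative_debug_time(N, 10)
print(monolith / modular)  # prints 10.0
```

The same codebase split into ten well-contained modules is, in this model, ten times cheaper to debug. That is the whole argument for giving the LLM a fenced-in yard.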

By mid-April, the UrbanKaoberg codebase had become large enough that I needed a stronger operating model, because the blast radius of non-deterministic LLM coding was out of control. Part of my big re-architecture project was to increase the modularity (K) of UrbanKaoberg’s internals to reduce my DebugTime.

I needed to re-architect the codebase and give the LLMs a smaller, fenced-in yard to play in. I have no interest in building a cathedral to software architecture just for the sake of “proper design,” but it was clear to me that the Brave New World of enlisting LLM coding agents required an entirely new method of engineering. I needed a practical containment system.

The governing idea was simple: a bug, refactor, or AI hallucination in one part of the product should not silently break unrelated parts of the product. That sounds obvious, but it is not automatic when AI can edit dozens of files in a session and sound extremely confident while doing it. AI sycophancy is real, and it can be a disaster when debugging.

The answer was to make ownership clearer: data quirks belong below the user interface, common plumbing belongs in shared places, and the screens the user sees should not be rummaging through raw vendor payloads like raccoons in a dumpster.

The point is not to create modules blindly just to increase module count. The point is ownership. Provider quirks should not leak into the GUI. Fetching logic should not be buried inside a thousand-line screen. A user-facing view should render a stable financial claim, not go spelunking through whatever data structure shape a data vendor happened to return that morning. This is not computer science for the sake of computer science. It exists because the failure modes were real.
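A minimal sketch of that ownership split, assuming two hypothetical vendors whose payloads differ in shape. Adapter functions own the vendor quirks and return one stable `Quote` type, and the rendering code only ever sees that type. All vendor names and field names here are invented for illustration.

```python
from dataclasses import dataclass
from typing import Any, Mapping, Optional


@dataclass(frozen=True)
class Quote:
    """Stable shape the UI layer is allowed to see."""
    symbol: str
    price: Optional[float]
    currency: str
    provider: str


def normalize_vendor_a(payload: Mapping[str, Any]) -> Quote:
    # Hypothetical vendor A: {"ticker": ..., "last": ..., "ccy": ...}
    # Assumption: a missing currency defaults to USD for this vendor.
    return Quote(payload["ticker"], payload.get("last"), payload.get("ccy", "USD"), "vendor_a")


def normalize_vendor_b(payload: Mapping[str, Any]) -> Quote:
    # Hypothetical vendor B nests the price: {"symbol": ..., "quote": {"price": ...}}
    price = (payload.get("quote") or {}).get("price")
    return Quote(payload["symbol"], price, payload.get("currency", "USD"), "vendor_b")


def render_price(q: Quote) -> str:
    # UI code formats a Quote; it never rummages through raw vendor dictionaries.
    if q.price is None:
        return f"{q.symbol}: n/a"
    return f"{q.symbol}: {q.price:.2f} {q.currency}"
```

If vendor B changes its payload shape tomorrow, only `normalize_vendor_b` changes; every screen that calls `render_price` is untouched.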

A document saying “please follow the architecture” is not enough. I have learned that if a rule matters, the enforcement mechanisms cannot be toothless. So I added automated guardrails to enforce some of those boundaries.

This matters because AI agents are very good at finding the shortest path to green, which is both their strength and their danger.

If the shortest path is wrong, the guardrail has to block it. I do not want an agent “remembering” some architectural preference because I wrote it in a document three weeks ago. I want the system to object when the rule matters. In finance terms, this is just risk management. You do not assume the trader never makes a mistake. You build limits.
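The post deliberately doesn't describe UrbanKaoberg's actual guardrails, so what follows is only a generic sketch of the idea: a check that fails when a UI module imports provider code directly. It could run in CI or as a pre-commit hook. The directory prefix `ui/`, the package name `providers`, and the function names are assumptions for illustration. The check uses Python's standard `ast` module to find imports, rather than matching text.

```python
import ast
from typing import Dict, Iterator, List

# Hypothetical layering rule: files under "ui/" must not import provider code directly.
FORBIDDEN_IMPORTS = {"ui/": ("providers",)}


def imported_modules(source: str) -> Iterator[str]:
    """Yield the absolute module names imported by a Python source file."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                yield alias.name
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            yield node.module


def find_violations(files: Dict[str, str]) -> List[str]:
    """Return 'path -> module' for each import that crosses a forbidden boundary."""
    violations = []
    for path, source in sorted(files.items()):
        for prefix, banned_roots in FORBIDDEN_IMPORTS.items():
            if not path.startswith(prefix):
                continue
            for module in imported_modules(source):
                if module.split(".")[0] in banned_roots:
                    violations.append(f"{path} -> {module}")
    return violations
```

Wired into CI so that a non-empty result fails the build, this turns “please follow the architecture” into a limit the agent cannot quietly route around.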

Lesson:

AI makes local fixes cheap, but local fixes without proper engineering make global damage expensive. The trick is not to slow the machine down for the sake of ceremony; it is to put guardrails where the blast radius is real.

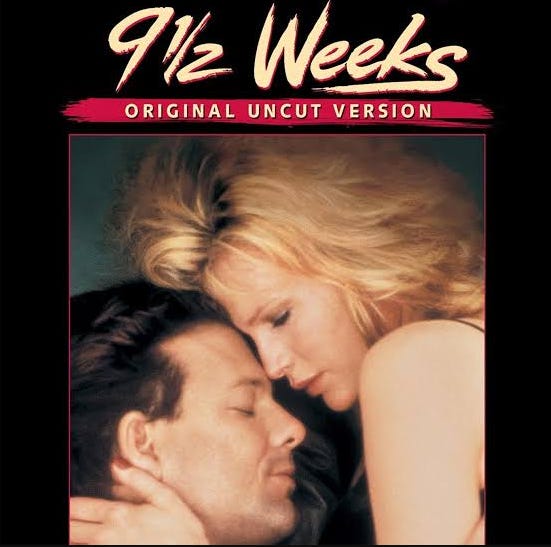

UrbanKaoberg’s 9 1/2 Weeks

UrbanKaoberg is now 9 1/2 weeks old, and although I can’t quite promise you the same level of titillation as the ’80s flick that shares its name, what follows is a play-by-play of the juicy details. Proceed at your own risk.

One of the biggest shifts in the codebase has been the introduction of “Domain Contracts,” a painfully dry name for a simple but crucial idea: when data crosses an important boundary in the app, it needs to carry its passport, meaning enough context for the rest of the app to understand it honestly. A price is not just a price. An earnings actual is not just a number. A macro release value is not just “latest.” Each carries context that determines whether it can be trusted, compared, displayed, or interpreted. If that context gets stripped away, the UI starts guessing, and guessing in a financial terminal is unacceptable. Worse, it may not even realize that it is guessing.
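As a rough illustration of a “passport,” here is a hypothetical Python type that bundles a value with the context described above. The field set (basis, period, source, as-of time) is an assumption drawn from the questions raised earlier in this piece, not UrbanKaoberg's actual contract.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Basis(Enum):
    GAAP = "GAAP"
    ADJUSTED = "adjusted"


@dataclass(frozen=True)
class FinancialClaim:
    """A value plus the 'passport' it carries across a boundary (illustrative fields)."""
    metric: str
    value: float
    unit: str
    basis: Optional[Basis]   # None when a GAAP/adjusted split does not apply
    period: str              # e.g. "TTM" or "FY2025"
    source: str              # provider name, filing, or "computed"
    as_of: datetime          # when the underlying data was observed

    def comparable_with(self, other: "FinancialClaim") -> bool:
        # Two claims can be compared only if they describe the same concept.
        return (self.metric, self.unit, self.basis, self.period) == (
            other.metric, other.unit, other.basis, other.period)

    def label(self) -> str:
        basis = f" ({self.basis.value})" if self.basis is not None else ""
        return f"{self.metric}{basis}, {self.period}, via {self.source}"
```

The point of `comparable_with` is that the UI never has to guess: an adjusted figure and a GAAP figure for the same quarter are simply not comparable, and the type says so.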

Another major area of focus has been Data Freshness. If a quote came from cache, the timestamp should reflect when the data was actually fetched, not when the screen happened to redraw.
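A minimal sketch of that rule: a time-to-live cache that records when the upstream source was called and returns that original time on every cache hit. It never reports the time of the read. The class name and the injectable `clock` parameter are assumptions for illustration. The clock is injectable so the behavior can be tested without waiting.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class Fetched:
    value: Any
    fetched_at: float   # epoch seconds at which the upstream fetch was made
    from_cache: bool


class TimestampedCache:
    """TTL cache that reports the original fetch time, never the read time (illustrative)."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.time):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[str, Tuple[Any, float]] = {}

    def get(self, key: str, fetch: Callable[[], Any]) -> Fetched:
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[1] < self.ttl:
            value, fetched_at = hit
            # Cache hit: keep the original timestamp so the UI stays honest.
            return Fetched(value, fetched_at, from_cache=True)
        value = fetch()
        self._store[key] = (value, now)
        return Fetched(value, now, from_cache=False)
```

A screen that redraws 30 seconds later still shows the original fetch time, and the `from_cache` flag lets it say so.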

Two places where Data Freshness matters a lot in UrbanKaoberg are Macro Releases and Earnings. That is where users expect the terminal to know when the number has actually dropped, not merely when CNBC started screaming about it. That sounds simple until you realize the app is hostage to underlying data sources that do not always update the instant the number hits the headlines. So the real job is not pretending to be omniscient. The real job is knowing what has arrived, what has not, and what should be labeled honestly.

For example, if the Macro Dashboard says it is showing the latest release, the app has to know whether today’s number has really arrived or whether it is still showing yesterday’s truth in today’s suit.
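Here is one way a tile could decide what “latest” honestly means. It is a hypothetical sketch that assumes the app knows each indicator's scheduled release date and the reference period that release should cover. It separates three situations that would otherwise render identically.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Observation:
    period: str    # reference period the value describes, e.g. "2026-04"
    value: float


def release_status(scheduled: date, expected_period: str,
                   latest: Optional[Observation], today: date) -> str:
    """Classify what a 'latest' macro tile can honestly claim (hypothetical policy)."""
    if latest is not None and latest.period == expected_period:
        return "released"          # the scheduled print is actually in hand
    if today < scheduled:
        return "prior_release"     # nothing new is due yet, so the last print is current
    return "awaiting_source"       # due, but the data source has not delivered it yet
```

The `awaiting_source` case is the one that matters most: it is yesterday's truth in today's suit. The tile should label it rather than present it as the new number.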

Earnings were even messier. Different companies report at different times, in different formats, with different definitions of what the “real” number is.

Think about what an Equity Analyst needs to consider when evaluating an earnings drop, where a beat or miss can flip depending on whether you are looking at GAAP, adjusted, split-adjusted, company-reported, calendar-aligned, or statement-derived numbers. If a market terminal shows a beat/miss verdict without understanding the basis of the numbers, that is not analysis. That is a coin flip in a nice font. The goal is not omniscience. The goal is intellectual honesty at machine speed: show the number when it is properly sourced, caveat it when necessary, and refuse to fake certainty when the data is not there.
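A minimal sketch of “refuse to fake certainty” for a beat/miss verdict, assuming each figure carries its basis and split-adjustment status. When the two figures don't share a basis, the function returns `None`, which the UI can render as “not comparable” instead of flipping a coin. The names are illustrative. A real implementation would also need a policy for rounding (e.g. to the cent) before comparing, which is not shown here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EpsFigure:
    value: float
    basis: str            # e.g. "gaap" or "adjusted"
    split_adjusted: bool


def beat_or_miss(actual: EpsFigure, estimate: EpsFigure) -> Optional[str]:
    """Return 'beat', 'miss', or 'in line' only when both figures share a basis.

    Returns None (render as 'not comparable') instead of guessing.
    """
    if actual.basis != estimate.basis or actual.split_adjusted != estimate.split_adjusted:
        return None
    if actual.value > estimate.value:
        return "beat"
    if actual.value < estimate.value:
        return "miss"
    return "in line"
```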

UrbanKaoberg’s AI Features have gone through a similar maturation. Early on, I was excited about LLM-generated commentary: Macro release summaries, trade signals, competitive moat analysis, earnings summaries, news sentiment, and so on. I still think those features are incredibly valuable, but the rules have tightened substantially to reduce hallucination risk.

In my opinion, LLMs should be treated as analysts, not sources of truth. That distinction is everything. An analyst can interpret data, summarize it, explain it, and point out relationships. An analyst should not invent the data. In one early failure mode, I caught Macro release commentary containing hallucinated sub-component tables. The prompt asked for useful detail, and the model obliged even when the source data did not contain the claimed breakdown. That is not because the model was malicious. It was doing what these models normally do: producing plausible output. Plausible is not good enough for a financial terminal.

So I built a more disciplined workflow around what the model is allowed to see, what it is allowed to say, and what the product is allowed to show. But the deeper fix was philosophical: never leave a truth-shaped hole for the LLM to fill. Structured data first. LLM analysis second. If the structured data is missing, the app should say it is missing. A clean “not available” beats a confident hallucination every time.
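A minimal sketch of “structured data first” as a gate in front of the model. `ask_model` is a stand-in for whatever LLM client is in use; no particular vendor API is implied. The field names in the usage are hypothetical. If any required field is missing, the model is never called and the app says so plainly.

```python
from typing import Callable, Mapping, Sequence

NOT_AVAILABLE = "Not available: the source data does not include this breakdown."


def commentary_for(release: Mapping[str, object],
                   required_fields: Sequence[str],
                   ask_model: Callable[[str], str]) -> str:
    """Only call the LLM when the structured data it would describe actually exists."""
    missing = [f for f in required_fields if release.get(f) is None]
    if missing:
        # Never leave a truth-shaped hole for the model to fill.
        return NOT_AVAILABLE
    facts = "\n".join(f"{f}: {release[f]}" for f in required_fields)
    prompt = ("Summarize ONLY the figures below. Do not add components, "
              "tables, or numbers that are not listed.\n" + facts)
    return ask_model(prompt)
```

The prompt instruction alone is not the safeguard, since models can ignore it. The real safeguard is that a missing sub-component never reaches the model as an invitation to invent one.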

AI Model Redundancy is also very important to UrbanKaoberg’s vision and value proposition. In the same way I wasn’t willing to bet my hedge fund on a single analyst’s acumen, I’m not willing to bet UrbanKaoberg’s intelligence on a single model provider. UrbanKaoberg’s architecture allows it to swap its AI “analysts” at will.

In “There And Back Again,” I wrote about Data Redistribution Rights and my push to build Data Provider Redundancy across providers. As with AI Model Redundancy, not being beholden to any one Data Provider remains one of the most important strategic objectives of UrbanKaoberg. Maybe it’s because I ran a hedge fund that actually tried to hedge unwanted risks, but I find myself layering hedges into UrbanKaoberg as well. I do not want to be hostage to anyone, and I do not want a single commercial data vendor to become existential. I also believe users benefit from seeing that different providers can disagree.

But redundancy is not magic. A Data Provider cascade sounds like plumbing, but it is really judgment encoded in software. It determines how the system behaves when providers disagree, go stale, go missing, or return something that looks suspicious. That is a lot of power.

If Data Provider 1 fails on a release-date endpoint, that is one kind of problem. If Data Provider 2’s earnings calendar lags a release-day actual, that is another. If Data Provider 3 times out on batch quotes, that is another. The UI should not treat all of these as the same blank cell.
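A hypothetical sketch of a cascade that records why each provider failed, so the UI can tell a timeout from an empty response rather than showing the same blank cell. The provider names, the priority order, and the failure categories are assumptions for illustration. This sketch does not address what to do when providers disagree, which needs its own policy.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Optional, Sequence, Tuple


class Failure(Enum):
    TIMEOUT = "timeout"
    EMPTY = "empty"
    STALE = "stale"


class ProviderError(Exception):
    def __init__(self, reason: Failure):
        super().__init__(reason.value)
        self.reason = reason


@dataclass
class CascadeResult:
    value: Optional[float]
    provider: Optional[str]
    failures: List[Tuple[str, Failure]] = field(default_factory=list)


def cascade(providers: Sequence[Tuple[str, Callable[[], float]]]) -> CascadeResult:
    """Try providers in priority order, recording WHY each one failed."""
    result = CascadeResult(None, None)
    for name, fetch in providers:
        try:
            result.value = fetch()
            result.provider = name
            return result
        except ProviderError as err:
            result.failures.append((name, err.reason))
    return result
```

Even on success, `failures` tells the UI (and the debugging human) that the primary source timed out and a secondary source filled in.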

I used to think of Data Caching mainly as a speed and cost problem. UrbanKaoberg has a much more disciplined caching architecture now, but the important lesson is not the plumbing. The important lesson is that caching is part of the trust layer.

If a Macro tile says “latest,” the cache policy helps determine whether that word is honest. If a user hits refresh, the app needs to know whether it is actually refreshing truth or just repainting yesterday’s answer with today’s confidence. Data Freshness determines the usefulness of the number. A financial datum without freshness is like a bond quote without a timestamp. It may still be useful, but only if you know what it is.

The Authentication migration deserves its own war story because login looks like a feature from the outside, but it is really a distributed state machine wearing a button. AI can generate sign-in screens, role checks, admin gates, webhooks, profile sync, and session handling very quickly. But authentication failures live in timing gaps. I dealt with all kinds of authentication minutiae: login loops, role-sync issues, session timing gaps, token weirdness, retry storms, and the general joy of discovering that “logged in” is not one state but a small haunted village of states. The code was not “bad” in the obvious sense. But under real traffic and timing conditions, authentication can fail in ways that look like random app instability.
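To make “not one state but a small haunted village of states” concrete, here is a hypothetical session state machine with explicit, checked transitions. The states and allowed transitions are assumptions for illustration, not UrbanKaoberg's auth design. The key idea is the gap between `AUTHENTICATED` (identity known) and `READY` (roles synced): a role-gated screen should wait for the latter.

```python
from enum import Enum, auto


class Session(Enum):
    ANONYMOUS = auto()
    AUTHENTICATING = auto()   # redirect/callback in flight
    AUTHENTICATED = auto()    # identity known, roles not yet synced
    READY = auto()            # identity and roles both loaded
    REFRESHING = auto()       # token refresh in progress
    EXPIRED = auto()


ALLOWED = {
    Session.ANONYMOUS: {Session.AUTHENTICATING},
    Session.AUTHENTICATING: {Session.AUTHENTICATED, Session.ANONYMOUS},
    Session.AUTHENTICATED: {Session.READY, Session.EXPIRED},
    Session.READY: {Session.REFRESHING, Session.EXPIRED, Session.ANONYMOUS},
    Session.REFRESHING: {Session.READY, Session.EXPIRED},
    Session.EXPIRED: {Session.AUTHENTICATING, Session.ANONYMOUS},
}


def transition(current: Session, nxt: Session) -> Session:
    """Move to the next state, rejecting transitions the design does not allow."""
    if nxt not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current.name} -> {nxt.name}")
    return nxt


def can_show_admin(state: Session, roles: frozenset) -> bool:
    # Gate on READY, not merely AUTHENTICATED: roles may not have synced yet.
    return state is Session.READY and "admin" in roles
```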

Security has also become front-and-center for UrbanKaoberg. Since March, the project has gone through a much more serious security-hardening phase: access controls, abuse prevention, privacy work, dependency review, entitlement logic, and the boring defensive plumbing that separates a DIY dashboard from a commercial product.

Work In Progress

The current battleground is Fundamentals Data Epistemology. It is probably the cleanest microcosm of the whole journey thus far and perhaps the most ambitious part of UrbanKaoberg’s goal. As mentioned, even Bloomberg can struggle to deliver consistently in this domain.

I am deliberately skipping some of the plumbing here, partly because it would bore normal humans into a coma, and partly because I have no interest in publishing the exact treasure map for every Bloomberg-in-a-browser cosplayer currently armed with a Claude subscription and a dream.

The project started with a simple observation from user screenshots: overlapping equity metrics were inconsistent across surfaces. Multiple surfaces in the product touch fundamentals in some way, but they were not all speaking the same language. Market Cap differed by surface. Enterprise Value had slightly different recipes depending on which module was cooking. Debt-to-Equity could be annual statement-derived in one place and TTM ratio-derived in another. Operating Margin looked wrong until I realized different panels were using different concepts under the same label. This is where the mullet metaphor stopped being funny and started getting expensive. The front-end was polished. The back-end was a mess. Revenge of the Mullet.

So now the work is to define one shared language. The solution is to stop treating metrics as naked numbers. Every important figure needs enough context attached for the system to know what it is, where it came from, when it was valid, whether it belongs on the screen at all, and when the app should warn the user rather than pretend precision. That may sound painfully dry and complex, but this is where trust is built.

Sector applicability is a major reason this matters. A metric that is useful for an industrial company can be misleading for a mortgage REIT. A CLO equity fund does not behave like Apple. A financial guarantor does not report like a SaaS company. A REIT’s operating metrics do not map cleanly to GAAP margins. A bank’s balance sheet is not “levered” in the same sense as an industrial company’s balance sheet. The first version of a financial app tends to treat all tickers as stocks. The second version has to admit that issuers are different animals. That is why issuer classification became important.
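A hypothetical sketch of issuer classification driving display policy. The issuer types and the handful of rules in the table are illustrative only, since the post does not describe UrbanKaoberg's actual scheme. The output has three possibilities (show, caveat, not applicable), which mirrors the idea that a value is not the only honest answer.

```python
from enum import Enum
from typing import Dict, Tuple


class IssuerType(Enum):
    OPERATING_COMPANY = "operating_company"
    BANK = "bank"
    MORTGAGE_REIT = "mortgage_reit"
    CLO_EQUITY_FUND = "clo_equity_fund"


class Display(Enum):
    SHOW = "show"
    CAVEAT = "caveat"
    NOT_APPLICABLE = "not_applicable"


# Illustrative rules only; not UrbanKaoberg's actual applicability table.
RULES: Dict[Tuple[IssuerType, str], Display] = {
    (IssuerType.BANK, "debt_to_equity"): Display.CAVEAT,
    (IssuerType.BANK, "ev_to_ebitda"): Display.NOT_APPLICABLE,
    (IssuerType.MORTGAGE_REIT, "operating_margin"): Display.NOT_APPLICABLE,
    (IssuerType.CLO_EQUITY_FUND, "operating_margin"): Display.NOT_APPLICABLE,
}


def display_policy(issuer: IssuerType, metric: str) -> Display:
    """Decide whether a metric belongs on screen for this kind of issuer."""
    return RULES.get((issuer, metric), Display.SHOW)
```

Defaulting to `SHOW` for unlisted pairs is a design choice. A more conservative terminal might default to `CAVEAT` for any issuer type that has not been reviewed yet.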

After decades of consuming datasets across multiple financial terminals and tools, I have yet to meet the “perfect” one. More often than not, as an analyst, I found it to be more of a headache to reconcile a “prepackaged” dataset’s financials with the actual underlying 10-K of the company I was analyzing.

Accuracy often comes at the cost of efficiency, and vice versa. UrbanKaoberg recognizes this truism and doesn’t want to make promises it can’t keep. The goal is not to over-engineer a perfect sector ontology on day one. The goal is to stop showing false precision. Sometimes the right answer is a value. Sometimes the right answer is a caveat. Sometimes the right answer is suppression. Sometimes the right answer is “not applicable.” A terminal earns trust not only by what it shows, but by what it refuses to pretend.

One of the subtler lessons of the last six weeks is that UI states are data semantics in disguise. A blank cell is not one state. It could mean the data is loading, missing, inapplicable, stale, disputed, or dangerous to show without context. If the UI renders all of those as the same blank, the app has thrown away truth.

UrbanKaoberg’s goal is to preserve the difference between “we have the number,” “we do not have the number,” “the number does not belong here,” and “the number exists but deserves a warning label.” To a normal person, that may sound like implementation detail. To me, it is the difference between a toy and a terminal.
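A minimal sketch of keeping those states distinct all the way to the screen. The state names follow the distinctions in the paragraph above (plus loading and stale). The rendering strings are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CellState(Enum):
    LOADING = "loading"
    MISSING = "missing"            # we do not have the number
    NOT_APPLICABLE = "n/a"         # the number does not belong here
    STALE = "stale"
    WARNING = "warning"            # the number exists but deserves a label
    OK = "ok"


@dataclass(frozen=True)
class Cell:
    state: CellState
    value: Optional[float] = None
    note: str = ""


def render(cell: Cell) -> str:
    """Render each state differently instead of collapsing them into one blank."""
    if cell.state is CellState.LOADING:
        return "loading"
    if cell.state is CellState.NOT_APPLICABLE:
        return "n/a"
    if cell.state is CellState.MISSING or cell.value is None:
        return "not available"
    text = f"{cell.value:,.2f}"
    if cell.state is CellState.STALE:
        return f"{text} (stale: {cell.note})"
    if cell.state is CellState.WARNING:
        return f"{text} (caution: {cell.note})"
    return text
```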

Another big change since “There And Back Again” is how I’ve developed a “Meta Workflow” between AI Agents. In the beginning, I mostly used them as tireless coders. Now I also use them as auditors, adversaries, and architectural reviewers.

I spend a huge amount of time in the planning stages before actual code implementation. My workflow forces the plan through multiple adversarial review steps. The point is not that the reviewer is always right; the point is that serious designs should survive contact with a hostile reader before they survive contact with users. This process has caught real problems — places where two different failure modes would have been collapsed into one, places where automation could have repeated work incorrectly, places where warnings could have been forgotten, and places where a local fix would have quietly weakened the broader system. One LLM is not always right, but the practice of forcing a design through an adversarial read by another LLM before writing code has been invaluable. This is one of the paradoxes of AI coding: the more AI I use to write code, the more AI-assisted review discipline I need to keep the code honest.

When I coded 35 years ago, I used to think of Documentation as something you write after the real work and dreaded doing it. That view is dead. In an AI-assisted codebase, documentation is operational memory. Good documentation tells the next session what is true, what was decided, what has been superseded, and what must not be casually “fixed” back into a previous bug.

AI agents do not have perfect memory across sessions. They do not automatically know which old plan was superseded. They do not know which decision was made verbally unless it is written down. They will happily resurrect a stale idea if the stale idea is easier to find than the current one. So the repo has to remember. That has changed my workflow dramatically. I now treat important design decisions as artifacts, not vibes, which is ironic given the term “vibe coding.”

Vibe Coding Requires MORE Engineering, Not Less

In the first essay, I wrote about returning to coding after 35 years. The last six weeks have been about returning to engineering. Coding is making the machine do something. Engineering is deciding what the machine should be allowed to do, how it should fail, what it should remember, and how the next person can debug it.

In 1992, I learned this through pain. A buggy release into a trading desk is an “educational” experience. Traders are not shy with feedback, especially when your GUI is slowing down their workflow.

In 2026, I am learning it again through a different kind of pain. The AI can generate more code in a day than I could have written in months, but the judgment burden does not disappear. It moves up a level. I am no longer asking, “Can this be coded?” Almost everything can be coded. The better question is, “Should this concept exist here?”

That question has become the heart of the work. Should the UI own this fallback? Should this provider field be authoritative? Should this value be cached? Should this metric be displayed for this issuer? Those are engineering questions, and they are now the main work.

In my opinion, AI coders still do not obviate good human engineers, just as AI writers still do not obviate good human authors.

SaaSpocalypse (Not) Now

My view on the SaaSpocalypse narrative has sharpened. I still believe AI dramatically lowers the cost of building software. That is not theoretical. I have lived it every day for two months. A domain expert can now build a serious product without hiring a traditional engineering team first. That changes the startup equation, the economics of software creation, and the value of domain expertise.

But I am even more convinced that the lazy version of the SaaSpocalypse thesis is wrong. AI does not eliminate the hard parts of building a software business; it just moves the pain to more interesting places.

In the first three weeks, I hit product scope, user workflow inertia, data licensing, and feature bloat. In the next six weeks, I hit architecture, provenance, cache semantics, auth edge cases, provider reliability, security hardening, and trust boundaries. The first 80% got faster. The last 20% became more obviously decisive.

If everyone can generate the visible surface, then the durable moat moves behind the surface. I am referring to data rights, workflow understanding, domain judgment, trust, security, reliability, operational maturity, and the ability to know when not to show a number. That last one matters more than people think.

I still think AI is a major threat to lazy SaaS. If your product is mostly commodity workflow screens wrapped around shallow domain understanding, you should be nervous. If your moat is that it used to be expensive to hire engineers to build forms and dashboards, that moat is shrinking.

I think of a SaaS business model as a recurring “tax” levied in exchange for a service. The complexity of the service obviously dictates the level of tax that the customer base will bear. AI coding has empowered customers to build DIY solutions in many cases, putting pressure on the tax these companies can levy. However, as I’ve been learning, there are limits to what DIY solutions can achieve before they run into serious engineering challenges. Gone are the days when these companies could levy exorbitant software taxes without challenge. But lower taxes, or slower tax hikes, do not automatically mean SaaS companies are marching toward insolvency, despite what markets seemed to be implying over the last several months.

Serious vertical software is not just screens. It is accumulated domain judgment encoded into workflows, data contracts, edge cases, integrations, permissions, audit trails, and trust boundaries. That package is much harder to clone. And even if someone copies the screens, they still have to survive the engineering trench warfare behind them.

A recent a16z piece by Seema Amble titled "Is Software Losing Its Head?" reaches a similar conclusion from the enterprise SaaS side of the chessboard. Her point is that as software goes “headless” in an agentic world, the old UI moat weakens, but the need for defensibility does not disappear; it migrates into data models, permissions, workflow logic, compliance, proprietary data generation, and execution loops.

This is exactly the lesson that taming the Mullet Code within UrbanKaoberg has been beating into my skull for the last six weeks:

Vibe coding makes it easy to make the front look professional, but the real value is in the judgment, data provenance, permissions, edge cases, and trust boundaries buried in the back.

Taming the Mullet

In “There And Back Again,” I wrote that if UrbanKaoberg works, it will not be because AI wrote the code; it will be because I knew what code needed to be written. I still believe that, but I would amend it now: it will be because I knew what code needed to be written, and where it belonged.

That second clause is the architecture lesson. A feature in the wrong layer becomes debt. A fallback in the wrong surface becomes inconsistency. A provider assumption in the wrong file becomes invisible coupling. A number without provenance becomes a trust problem. AI can generate any of these quickly. The human has to protect the system from accumulating them.

If the first three weeks were the euphoric climb up Mount Stupid, the last six weeks were the messy ups and downs of discovering the multiple peaks of the Mount Stupid Range. I am far from the Peak of Enlightenment, but at least each subsequent valley is shallower!

UrbanKaoberg’s codebase is much stronger now. The layers are real. The boundary checks pass. The module registry is cleaner. The contracts are accumulating where actual bug classes justify them. The roadmap is more disciplined. Security is no longer an afterthought. The app is getting less magical and more reliable. That is progress, not the exciting kind, but the most substantive kind.

I still have a lot to do, but the trajectory is right. The project has moved from “Can I build it?” to “Can I trust it?” — which I think is the most important question.

Final Lesson:

Vibe coding is real, powerful, creative, addictive, and occasionally terrifying. It lets a domain expert build at a speed that would have seemed absurd a few years ago. It collapses the distance between idea and implementation. It gives almost anyone the ability to hack together an MVP.

But vibe coding also creates Mullet Code risk. The front can become professional long before the back becomes disciplined. That is the trap. If you stop at the demo, you may never notice. If you invite real users, handle real data, touch real payments, and ask people to trust the output, you will notice.

The cure is not to stop using AI. The cure is to raise the engineering bar: clearer ownership, tighter boundaries, honest data states, stronger security, better tests, and a system that knows when confidence is earned rather than assumed.

In the first phase of UrbanKaoberg, AI helped me rediscover coding. In the second phase, it forced me to rediscover engineering. And if this thing ultimately works as a business, it will not be because the AI wrote 160,000 lines of code. It will be because I figured out how to tame the Mullet!

If you haven’t tried UrbanKaoberg.com yet, please check out the free beta and let me know what you think:

https://urbankaoberg.com/

ICYMI, here is Part I of the UrbanKaoberg saga: